For instance, better Euclidean solutions can be found using k-medians and k-medoids. k-means clustering minimizes within-cluster variances ( squared Euclidean distances), but not regular Euclidean distances, which would be the more difficult Weber problem: the mean optimizes squared errors, whereas only the geometric median minimizes Euclidean distances. This results in a partitioning of the data space into Voronoi cells. This alternative approach is referred to as Scheme 2.K-means clustering is a method of vector quantization, originally from signal processing, that aims to partition n observations into k clusters in which each observation belongs to the cluster with the nearest mean (cluster centers or cluster centroid), serving as a prototype of the cluster.

Classify a new feature x to the class of the closest prototype.Associate the prototype with the class that has the highest count. For each prototype, count the number of samples from each class that are assigned to this prototype.Apply k-means clustering to the entire training data, using M prototypes.During the classification of a new data point, the procedure then goes in the same way as Scheme 1. The dominant class with the most data points is associated with the prototype. To associate a prototype with a class, we count the number of data points in each class that are assigned to this prototype.

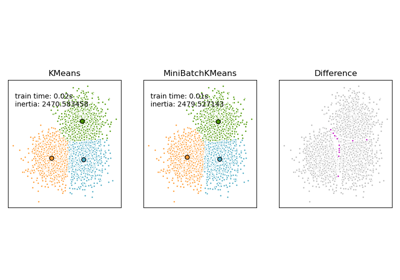

There's no guarantee that points in the same group are of the same class because we conducted k-means on the class blended data. The second scheme of classification by k-means is to put all the data points together and perform k-means once. Another Approach for using K-Means - Scheme 2 Because we have more than one prototype for each class, the classification boundary between the two classes is connected segments of the straight lines, which gives a zigzag look. The region around each prototype is sometimes called the voronoi region and is bounded by hyperplanes. Every prototype occupies some region in the space. The decision boundary between any two prototypes based on the nearest neighbor rule is linear. Specifically, these are the classification boundaries induced by the set of prototypes based on the nearest neighbor. The black lines are classification boundaries generated by the k-means algorithm. Then, depending on the color code of that prototype, the corresponding class will be assigned to the new point. We can see below that for each class, the 5 prototypes chosen are shown by filled circles.Īccording to the classification scheme, for any new point, among these 15 prototypes, we would find the one closest to this new point. The authors applied k-means using 5 prototypes for each class. There are three classes green, red, and blue. This above approach to using k-means for classification is referred to as Scheme 1.īelow is a result from the textbook using this scheme.
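To make the two schemes concrete, here is a minimal sketch in plain Python. It is not the textbook's code. The function names (`kmeans`, `fit_scheme1`, `fit_scheme2`, `predict`), the random initialization, the fixed seed, and the tiny two-class toy data set are all assumptions made for illustration. The k-means routine is a basic Lloyd-style loop; a real application would more likely use a library implementation.

```python
import random
from collections import Counter

def sq_dist(a, b):
    """Squared Euclidean distance between two points given as tuples."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def nearest(point, prototypes):
    """Index of the prototype closest to `point`."""
    return min(range(len(prototypes)), key=lambda j: sq_dist(point, prototypes[j]))

def kmeans(points, k, n_iter=50, seed=0):
    """Basic Lloyd iteration; returns a list of k prototypes (cluster means)."""
    rng = random.Random(seed)
    prototypes = rng.sample(points, k)  # assumption: initialize from data points
    for _ in range(n_iter):
        groups = [[] for _ in range(k)]
        for p in points:
            groups[nearest(p, prototypes)].append(p)
        # Recompute each prototype as the mean of its group;
        # an empty group keeps its previous prototype.
        updated = [
            tuple(sum(coord) / len(g) for coord in zip(*g)) if g else prototypes[j]
            for j, g in enumerate(groups)
        ]
        if updated == prototypes:
            break
        prototypes = updated
    return prototypes

def fit_scheme1(X, y, per_class):
    """Scheme 1: run k-means separately on the points of each class."""
    protos, labels = [], []
    for c in sorted(set(y)):
        members = [x for x, lab in zip(X, y) if lab == c]
        centers = kmeans(members, per_class)
        protos.extend(centers)
        labels.extend([c] * len(centers))
    return protos, labels

def fit_scheme2(X, y, M):
    """Scheme 2: run k-means once on all points, then label prototypes by majority."""
    protos = kmeans(X, M)
    votes = [Counter() for _ in protos]
    for x, lab in zip(X, y):
        votes[nearest(x, protos)][lab] += 1
    # A prototype with no assigned points gets the label None.
    labels = [v.most_common(1)[0][0] if v else None for v in votes]
    return protos, labels

def predict(x, protos, labels):
    """Both schemes classify a new point by the class of its closest prototype."""
    return labels[nearest(x, protos)]

# Hypothetical toy data: two well-separated classes in the plane.
X = [(0.0, 0.0), (0.2, 0.1), (0.1, 0.3), (5.0, 5.0), (5.2, 4.9), (4.9, 5.1)]
y = ["red", "red", "red", "blue", "blue", "blue"]

p1, l1 = fit_scheme1(X, y, per_class=1)
p2, l2 = fit_scheme2(X, y, M=2)
print(predict((0.1, 0.1), p1, l1), predict((5.1, 5.0), p1, l1))
print(predict((0.1, 0.1), p2, l2), predict((5.1, 5.0), p2, l2))
```

The only difference between the two fitting functions is where the class labels enter: Scheme 1 uses them before clustering to split the data, while Scheme 2 clusters the pooled data and uses the labels afterwards to vote on each prototype's class. Prediction is identical in both cases.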
